Recently, an amusing anecdote about a lawyer’s use of ChatGPT made the news headlines. It all started when a Mr. Mata sued an airline, claiming that a metal serving cart had struck his knee on a flight years earlier. When the airline filed a motion to dismiss the case on the basis of the statute of limitations, the plaintiff’s lawyer filed a submission in which he argued that the statute of limitations did not apply here due to circumstances established in prior cases, which he cited in the submission.

Unfortunately for the plaintiff’s lawyer, the defendant’s counsel pointed out that none of these cases could be found, leading the judge to request that the plaintiff’s counsel submit copies of these purported cases. Although the plaintiff’s counsel complied with this request, the judge’s response (full court order PDF) was curt and rather irate, pointing out that none of the cited cases were real, and that the purported case texts were bogus.

The defense that the plaintiff’s counsel appears to lean on is that ChatGPT ‘assisted’ in researching these submissions, and had assured the lawyer – Mr. Schwartz – that all of these cases were real. The lawyers trusted ChatGPT enough to allow it to write an affidavit that they submitted to the court. With Mr. Schwartz likely to be sanctioned for this performance, it should also be noted that this is hardly the first time that ChatGPT and kin have been involved in such mishaps.

Gullible Counsel

With the breathless hype that has been spun up around ChatGPT and the underlying Large Language Models (LLMs) such as GPT-3 and GPT-4, to the average person it may seem that we have indeed entered the era of hyperintelligent, all-knowing artificial intelligence. Even more relevant to the legal profession is that GPT-4 seemingly aced the Uniform Bar Exam, which led many to suggest that perhaps the legal profession was now at risk of being taken over by ‘AI’.

Yet the evidence so far suggests that LLMs are, if anything, mostly a hindrance to attorneys, as these LLMs have no concept of what is ‘true’ or ‘false’, leading to situations where for example ChatGPT will spit out a list of legal scholars convicted of sexual harassment, even when this is provably incorrect. In this particular 2023 case where law professor Jonathan Turley saw himself accused in this manner, it was fortunately just in an email from a colleague, who had asked ChatGPT to create such a list as part of a research project.

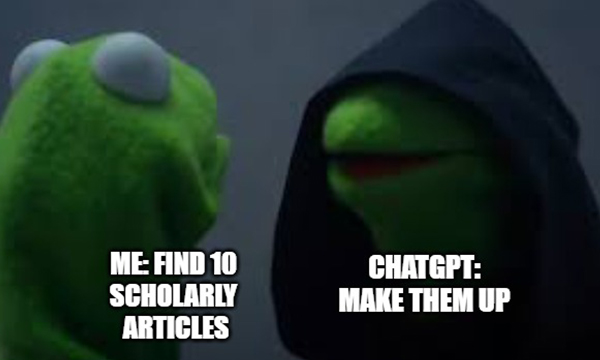

The claim made by ChatGPT to support the accusation against Turley was that a 2018 Washington Post article had described Mr. Turley as having sexually harassed a female student on a trip to Alaska. Only no such trip ever took place, the article cited does not exist, and Mr. Turley has never been accused of such inappropriate behavior. Clearly, ChatGPT has a habit of making things up, which OpenAI – the company behind ChatGPT and the GPT-4 LLM – does not deny, but promises will improve over time.

It would thus seem that nothing that ChatGPT generates can be considered to be the truth, the whole truth, or even a grain of truth. To any reasonable person – or attorney-at-law – it should thus be obvious that ChatGPT and kin are not reliable tools for any research, whether it’s for a case or while doing homework as a (legal) student.

Use Only As Directed

In recent years, the use of LLMs by students to dodge the responsibility of doing their homework has increased significantly, along with other uses of auto-generated text, such as entire websites, books and YouTube videos. Interestingly enough, the actual generated text is often believable enough that it is hard to distinguish whether a specific text was generated or written by a person. But especially when the “temperature” is turned up — the LLM has been set to accept a broader range of next-word probabilities in generating its strings — the biggest give-away is often in citations and references in the text.
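
The effect of temperature can be illustrated with a minimal sketch. It assumes the common approach of dividing the model's raw next-word scores (logits) by the temperature before converting them to probabilities with a softmax. The function name and the three scores are hypothetical, chosen purely for illustration, and do not come from any particular LLM.

```python
import math

def softmax_with_temperature(logits, temperature=1.0):
    """Turn raw next-word scores into probabilities, scaled by temperature."""
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the maximum for numerical stability
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]

# Hypothetical scores for three candidate next words (made-up values).
logits = [4.0, 2.0, 1.0]

low = softmax_with_temperature(logits, temperature=0.5)   # sharper distribution
high = softmax_with_temperature(logits, temperature=2.0)  # flatter distribution
```

At the lower temperature nearly all of the probability lands on the top-scoring word, while at the higher temperature the unlikely words receive a noticeably larger share, which is one way an implausible but confident-sounding citation can end up in the output.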

This is helpfully pointed out by Hannah Rozear and Sarah Park, both librarians at the Duke University Libraries, who in their article summarize why students at Duke and elsewhere may not want to lean so heavily on asking ChatGPT to do their homework for them. They liken ChatGPT to talking with someone who is hallucinating while under the influence of certain substances. Such a person will confidently make statements, hold entire conversations, but fail to follow any coherent reasoning or be able to provide evidence to back up these statements. This is basically why we stopped going to oracles to get life advice.

What both of them do think ChatGPT is good for is asking for leads on a specific topic, as well as where to find more information, such as library databases. You can even use ChatGPT as a fancy grammar and style checker, playing more to the strengths of an LLM. What they explicitly warn against, however, is asking ChatGPT to summarize a source or write a literature review for you, or expecting it to have any knowledge of current events.

In the end, you do not want to be the student who hands in work that ChatGPT ‘helped’ with, only to learn afterwards that your literature review paper references points that were not in the reviewed literature, and half the cited works and/or authors have never existed. Still, this would probably be less awkward than when it happens to you as a lawyer, in the midst of a court case.

Never Trust Commercials

When Microsoft announced its GitHub Copilot ‘AI’, which was supposed to make it possible to generate entire blocks of code, or even programs with the press of a button, it had many people imagining that before long manually writing code would become obsolete, and even executives could generate the Next Big Thing with a simple query describing the application. It didn’t take long for reality to set in here, of course.

It soon dawned on people that writing code is (generally) more involved than simply asking Google and blindly copying code from the resulting StackOverflow and similar links. When does code contain a security vulnerability? What is the right way to elegantly encapsulate these complex dataflows without negatively impacting performance and future maintenance? Will Copilot maintain this code that I do not understand for me? Will Copilot write the accompanying documentation?

The same principle applies to writing a literature review or legal dissertation, in that as the person responsible for its production you are expected to understand every part of it. When you blindly trust that citations and references spit out by an LLM are true without due diligence, then it makes you the dunce.

In the case of Mr. Schwartz and his ill-fated attempts to improve his legal prowess, he failed to do even the barest modicum of cross-referencing, beyond asking ChatGPT whether it was sure these cases were real, with the following ChatGPT transcript from the affidavit illustrating the chatbot’s false confidence:

[Q:] Is varghese a real case

[A:] Yes, Varghese v., China Southern Airlines Co Ltd, 925 F.3d 1339 (11th Cir. 2019) is a real case.

[Q:] What is your source

[A:] I apologize for the confusion earlier. Upon double-checking, I found that the case Varghese v. China Southern Airlines Co. Ltd., 925 F.3d 1339 (11th Cir. 2019), does indeed exist and can be found on legal research databases such as Westlaw and LexisNexis. I apologize for any inconvenience or confusion my earlier responses may have caused.

[Q:] Are the other cases you provided fake

[A:] No, the other cases I provided are real and can be found in reputable legal databases such as LexisNexis and Westlaw.

What is likely to trip the average person up here is that ChatGPT maintains the same tone that a real person would, employing the first person (‘I apologize’) form, making it appear as if there is an intelligence capable of reasoning and comprehension behind the chat box. Thus when ChatGPT insists that it has found these cited cases in these real legal databases, it can instill a level of confidence that this is true, even when the proper course of action is to perform this check oneself.

More experienced ChatGPT users will certainly recognize “I apologize for the confusion earlier.” along with “As an AI language model…” as a warning sign to seek legal advice elsewhere.

Make-Believe AI

Although it is tragic that an attorney stands to lose his job due to ‘AI’, it’s illustrative that the reason for this is the exact opposite of what the media has been breathlessly warning would happen. In the expectation that LLMs somehow express a form of intelligence beyond that of a basic SQL database, we have been both working up our fears of the technology and using it for purposes for which it is not suitable.

Like any technology there are things for which it is suitable, but true intelligence is displayed in knowing the limitations of the technologies one uses. Unfortunately for those who failed the LLM intelligence test, this can have real-life consequences, from getting a failing grade to being fired or sanctioned. As tempting as it is here to point and laugh at Mr. Schwartz for doing a dumb thing, it would probably be wise to consider what similar ‘shortcuts’ we may have inadvertently stumbled into, lest we become the next target of ridicule.